Many people now rely on AI tools to handle tasks that once required their full attention.

Drafting documents, summarizing information, organizing ideas, or generating first versions of work can now happen in seconds. Instead of doing every step themselves, individuals increasingly ask a system to produce part of the output.

At first this feels like simple automation.

But structurally, something more significant is happening: cognitive work is being redistributed between human and machine nodes.

Systems Layer

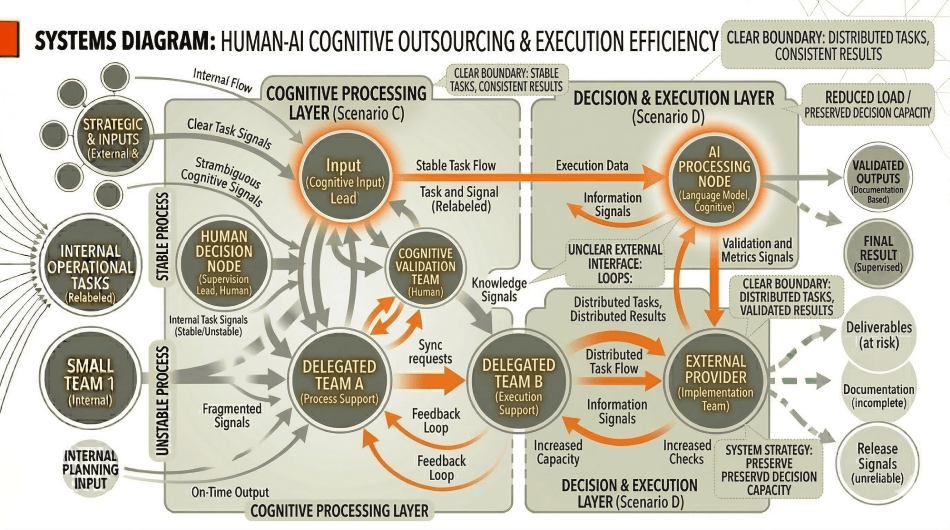

Human-AI collaboration can be understood as a form of cognitive outsourcing.

In traditional outsourcing, operational tasks move from an internal role to an external provider. In human-AI systems, portions of cognitive processing move from the human node to an AI processing node.

These tasks may include:

- information synthesis
- language generation
- pattern recognition
- structured reasoning
- idea expansion

The AI node processes inputs provided by the human and returns outputs that the human can review, modify, or integrate into the larger workflow.

However, while AI can absorb portions of the processing load, structural accountability remains with the human node.

The human node interprets context, defines the objective, evaluates outputs, and determines how those outputs influence system decisions.

The AI node expands cognitive capacity but does not replace outcome ownership.

Structural Translation

In simple terms, AI tools act like a new type of assistant for thinking work.

Instead of doing every step yourself, you can pass certain mental tasks—such as drafting, summarizing, or organizing information—to the AI.

The AI produces a result, which you then review and refine.

The thinking process becomes shared between the human and the tool.

But the human still decides what the system is trying to accomplish and whether the output is correct.

Structural Implication

When human-AI systems are poorly structured, two problems commonly appear.

First, individuals may treat AI outputs as final results rather than intermediate processing. This can introduce errors because the AI lacks full contextual awareness of the system’s objectives.

Second, individuals may spend excessive time supervising AI outputs if prompts, expectations, or evaluation criteria remain unclear.

In both cases, the system fails to distribute cognitive load effectively.

Human-AI collaboration becomes efficient only when the system clearly defines which cognitive tasks move to the AI node and which decisions remain with the human node.
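One way to picture that division is a small Python sketch. It is an illustration only, not a prescribed implementation: the names `Task`, `Result`, and `run_task`, and the three callables standing in for the AI node and the human node, are all hypothetical. The sketch shows processing optionally moving to the AI node while review and ownership stay with the human.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    name: str
    delegated_to_ai: bool  # True: processing moves to the AI node


@dataclass
class Result:
    task: str
    draft: str
    approved: bool
    owner: str = "human"  # accountability stays with the human node


def run_task(
    task: Task,
    ai_node: Callable[[str], str],        # hypothetical stand-in for an AI tool
    human_review: Callable[[str], bool],  # human evaluation of any draft
    human_work: Callable[[str], str],     # human does the processing directly
) -> Result:
    # Processing is routed by the explicit allocation on the task.
    if task.delegated_to_ai:
        draft = ai_node(task.name)
    else:
        draft = human_work(task.name)
    # Every draft is an intermediate result until the human evaluates it.
    approved = human_review(draft)
    return Result(task=task.name, draft=draft, approved=approved)
```

The allocation is written down on the task itself rather than decided case by case, and no output counts as final until the human review step has run. Both are assumptions of this sketch, chosen to mirror the two failure modes described above.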

Leverage Insight

Within the Outsourcing and Load Distribution pillar, AI represents a new form of distributed cognition.

When designed properly, human-AI systems redistribute mental processing across multiple nodes while preserving human accountability for outcomes.

This expands system capacity without dissolving responsibility.